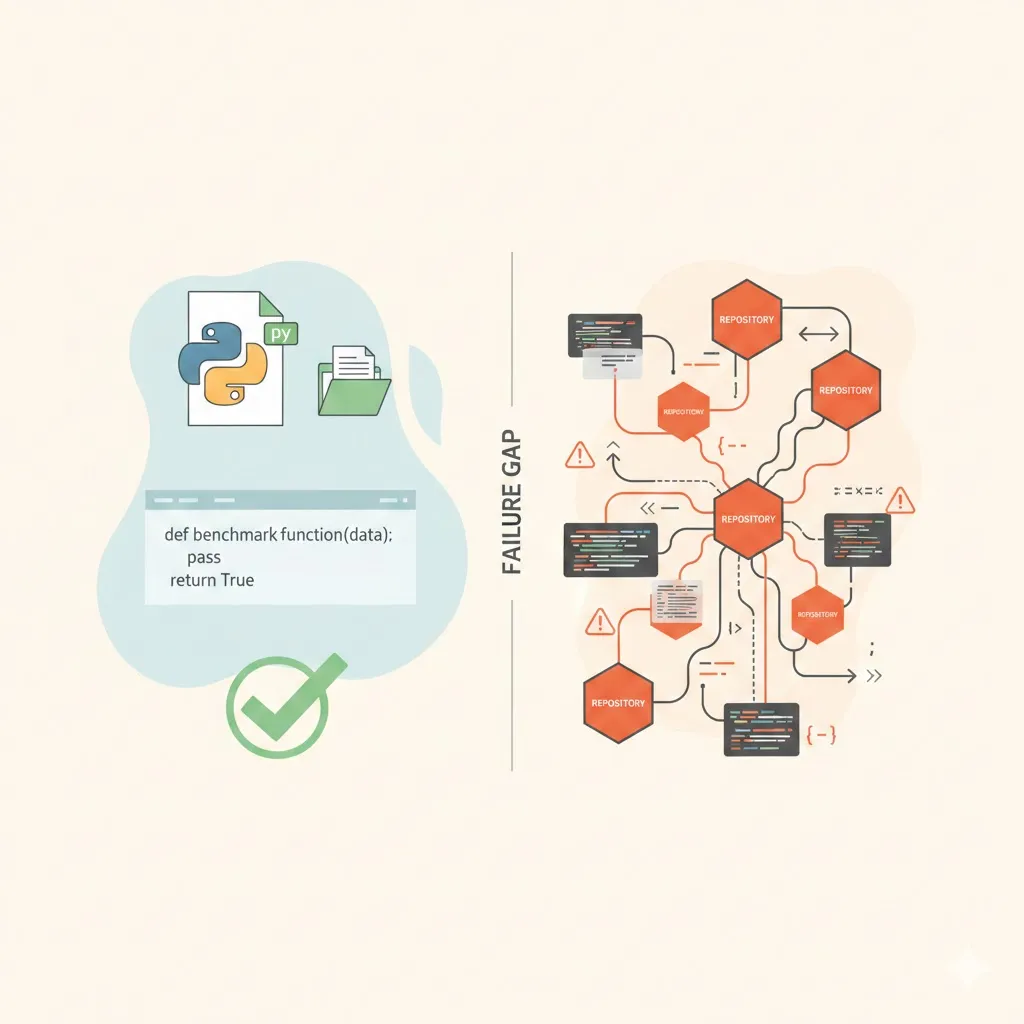

Why Standard Coding AI Benchmarks Fail for Cross-Repository Systems

Most coding LLM benchmarks were never designed for the kind of system you’re actually building.

If your model just needs to fill in a function body given a clear signature and docstring, benchmarks like HumanEval and MBPP are reasonable proxies. But if you’re building a GraphRAG-powered assistant that reasons across tens of thousands of files, multiple repositories, and evolving services, those benchmarks will tell you almost nothing about whether your system actually works.

This post explains why—and what you should measure instead.

What Standard Benchmarks Really Measure

Benchmarks like HumanEval, MBPP, and SWE-Bench share a common assumption: everything needed to solve the problem sits in one bounded, static context, whether that is a single prompt or a single repository snapshot.

HumanEval / MBPP

You get:

- A function signature

- A docstring or natural language description

And the model:

- Generates the function body in a single file

The evaluation:

- Runs unit tests against that function

None of this requires retrieval. The “knowledge” is either:

- Memorized parametric knowledge inside the model weights, or

- Directly expressed in the prompt.
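The setup above can be sketched in a few lines. This is a toy illustration, not the actual HumanEval or MBPP harness (real harnesses sandbox execution and enforce timeouts); the `passes` helper and the `add` task are hypothetical. Note that there is no retrieval step anywhere in the loop.

```python
def passes(prompt: str, completion: str, test_source: str) -> bool:
    """Return True if prompt + completion defines code that passes the tests."""
    namespace: dict = {}
    try:
        exec(prompt + completion, namespace)  # define the candidate function
        exec(test_source, namespace)          # run bare asserts against it
    except Exception:
        return False
    return True


# Hypothetical task in the HumanEval/MBPP style: signature plus docstring.
PROMPT = 'def add(a, b):\n    """Return the sum of a and b."""\n'
TESTS = "assert add(2, 3) == 5\nassert add(-1, 1) == 0\n"

print(passes(PROMPT, "    return a + b\n", TESTS))  # True: correct body
print(passes(PROMPT, "    return a - b\n", TESTS))  # False: a test fails
```

Everything the model needs is in `PROMPT` or in its weights, which is exactly why a score on this kind of task says little about retrieval.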

Even SWE-Bench, which uses real GitHub issues and multi-file patches, is still fundamentally a single-repository setting. The model is evaluated on whether it can modify files within a given codebase to pass tests, not on whether it can retrieve and integrate context from other repositories or services.

These benchmarks are essential for measuring core code generation and reasoning, but they are almost blind to retrieval quality, especially in realistic, cross-repo settings.

Your Reality: Cross-Repository GraphRAG at Scale

A GraphRAG system changes the game. Instead of “generate a function in this file,” your system operates more like:

“Given this question about our platform, find and reason over the relevant code, configs, schemas, and policies scattered across dozens of repositories.”

The assumptions behind standard benchmarks fall apart:

| Benchmark Assumption | GraphRAG Reality |
|---|---|
| Single file context | 25 repositories, 50,000+ files |
| Self-contained problems | Cross-service dependencies |
| Language-homogeneous | Polyglot (Python, TypeScript, SQL, YAML, etc.) |
| Static evaluation | Repositories evolve over time |
| Function-level generation | Multi-file, sometimes multi-repo modifications |

A GraphRAG architecture (like Microsoft’s GraphRAG designs) organizes code and artifacts into a graph of entities and relationships—services, APIs, database tables, configuration, policies, and more—then uses retrieval over that graph as the first-class operation. Generation is conditioned on what’s retrieved.
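To make "a graph of entities and relationships" concrete, here is a minimal sketch of such a structure. It is not Microsoft's GraphRAG data model; the `Entity` and `ContextGraph` classes, the relation labels, and the repository names are all assumptions made for illustration.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    """A node in the context graph (all fields are illustrative)."""
    name: str  # e.g. "billing-service" or "invoices"
    kind: str  # e.g. "service", "api", "table", "policy"
    repo: str  # repository the entity was extracted from


class ContextGraph:
    """Directed graph with labeled edges, stored as adjacency lists."""

    def __init__(self) -> None:
        self._edges: dict[Entity, list[tuple[str, Entity]]] = defaultdict(list)

    def add_edge(self, src: Entity, relation: str, dst: Entity) -> None:
        self._edges[src].append((relation, dst))

    def neighbors(self, src: Entity, relation: str | None = None) -> list[Entity]:
        """Entities one hop from src, optionally filtered by relation label."""
        return [dst for rel, dst in self._edges.get(src, [])
                if relation in (None, rel)]


# Hypothetical entities spread across three repositories.
service = Entity("billing-service", "service", "billing-service")
table = Entity("invoices", "table", "db-schemas")
policy = Entity("retention", "policy", "compliance")

graph = ContextGraph()
graph.add_edge(service, "reads", table)
graph.add_edge(table, "governed_by", policy)

print(graph.neighbors(service))               # the invoices table
print(graph.neighbors(table, "governed_by"))  # the retention policy
```

Retrieval in this picture means selecting nodes and edges, and generation only sees what that selection returns.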

That’s a fundamentally different task from “complete this function in foo.py.”

Why Repository-Level Benchmarks Still Aren’t Enough

You might think: OK, but what about SWE-Bench and other repository-level benchmarks? Those are more realistic, right?

They’re a step forward, but still not enough.

Work like CodeRAG-Bench has shown a 9-point performance gap between:

- Oracle retrieval: The benchmark hands the model the exact “gold” documents it needs

- Realistic retrieval: The model has to find relevant files via RAG, even with a strong model like GPT-4o

In other words: even at single-repository scale, retrieval is hard. Once you move to cross-repository retrieval, everything gets worse:

- The retrieval space grows from thousands of files to tens of thousands across multiple repos

- Relevant information may be split across:

  - Backend services

  - Frontend apps

  - Shared libraries

  - Database schemas

  - Infrastructure and security configs

Your GraphRAG context graph and retrieval pipeline are built precisely to navigate this complexity. But no existing benchmark is designed to test whether your system actually does this well.

Unique Failure Modes in Cross-Repository Systems

Cross-repository queries introduce failure modes that standard benchmarks simply cannot surface.

1. Entity Resolution Across Codebases

The same conceptual entity—User, Authentication, Payment, etc.—shows up in many places:

- user-service for core identity and auth

- web-frontend managing sessions and tokens

- billing-service tying users to subscriptions or invoices

- compliance repository encoding security constraints

A query like “explain the user authentication flow” isn’t just about one function or one service. A good GraphRAG pipeline must resolve:

- Which repositories actually define the relevant logic

- How each repository’s representation of “user” links to the others

- How the flow spans:

  - Backend auth

  - Frontend session management

  - Database schemas

  - Security and compliance policies

Standard benchmarks have no notion of entity resolution across heterogeneous codebases. They assume a single, local context where entities live in one place.
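As a rough illustration of what entity resolution involves, the sketch below groups symbol mentions from different repositories under one canonical concept. The alias table, the symbol names, and the token-matching heuristic are all hypothetical; a real pipeline would likely lean on types, imports, or embeddings rather than name matching alone.

```python
from __future__ import annotations

import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Mention:
    repo: str
    symbol: str  # identifier as it appears in that repository


# Hypothetical alias table mapping name tokens to canonical concepts.
ALIASES = {"user": "user", "account": "user", "customer": "user",
           "auth": "authentication", "login": "authentication"}


def canonical_name(symbol: str) -> str:
    """Map a camelCase or snake_case identifier to a canonical concept."""
    tokens = [t.lower()
              for t in re.findall(r"[A-Z]?[a-z]+|[A-Z]+(?![a-z])", symbol)]
    for token in tokens:
        if token in ALIASES:
            return ALIASES[token]
    # Fall back to the first token when no alias matches.
    return tokens[0] if tokens else symbol.lower()


def resolve(mentions: list[Mention]) -> dict[str, set[str]]:
    """Group repositories by the canonical concept they mention."""
    repos_by_concept: dict[str, set[str]] = defaultdict(set)
    for mention in mentions:
        repos_by_concept[canonical_name(mention.symbol)].add(mention.repo)
    return dict(repos_by_concept)


mentions = [
    Mention("user-service", "UserModel"),
    Mention("web-frontend", "user_session"),
    Mention("billing-service", "CustomerAccount"),
    Mention("billing-service", "PaymentIntent"),
]
resolved = resolve(mentions)
print(sorted(resolved["user"]))
```

An evaluation for this failure mode would compare groupings like `resolved` against hand-labeled ground truth for a set of concepts.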

2. Dependency Graph Complexity

Real systems are layered and interconnected:

- Backend repositories import shared connectors or libraries

- Frontend apps call backend APIs or GraphQL endpoints

- SQL schemas and migrations constrain both

- Security policies and infra configs wrap the entire stack

A GraphRAG system builds (or approximates) a dependency graph that says:

- Service A calls API B in Service C

- That API touches table T with schema S

- That table is subject to policy P

Correct retrieval requires walking these paths:

- Starting from a user query

- Identifying the relevant services, APIs, tables, and configs

- Pulling in the right slices of each, without drowning the model in irrelevant code

Standard benchmarks have no mechanism to test whether the system walks the right edges in this graph. At best, they test “did you edit the right file in this one repo?”
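Walking those paths can be sketched as a bounded breadth-first traversal that only follows certain relation types. The edge list, relation labels, and `walk` function below are assumptions for illustration, not part of any GraphRAG library.

```python
from collections import deque

# Hypothetical dependency edges: (source, relation, target).
EDGES = [
    ("web-frontend", "calls", "GET /invoices"),
    ("GET /invoices", "served_by", "billing-service"),
    ("billing-service", "reads", "invoices table"),
    ("invoices table", "governed_by", "retention policy"),
    ("billing-service", "imports", "shared-logging"),
]


def walk(start, allowed_relations, max_hops):
    """Breadth-first walk; returns each reached node with its hop distance."""
    adjacency = {}
    for src, rel, dst in EDGES:
        adjacency.setdefault(src, []).append((rel, dst))

    distance = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distance[node] == max_hops:
            continue  # hop budget exhausted on this path
        for rel, dst in adjacency.get(node, []):
            if rel in allowed_relations and dst not in distance:
                distance[dst] = distance[node] + 1
                queue.append(dst)
    return distance


data_path = {"calls", "served_by", "reads", "governed_by"}
print(walk("web-frontend", data_path, max_hops=4))
```

Filtering by relation keeps `shared-logging` out of the context here, which is the "without drowning the model in irrelevant code" part; the hop limit bounds how much of the graph one query can pull in.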

3. Version and Branch Coherence

In a multi-repo, actively developed system:

- Backend is on commit A

- Frontend is on commit B

- Schema migrations might be on a different timeline

- Feature branches may be deployed in partial combinations

A GraphRAG system that naïvely pulls:

- Backend code from main,

- Frontend code from an experimental branch,

- An outdated SQL migration

…will produce an internally incoherent context, leading to incorrect reasoning or suggestions.

Evaluating version coherence means asking:

- Did the system retrieve context that is mutually consistent?

- Are the backend and frontend artifacts from compatible versions?

- Are the schemas and configs aligned with the code they’re supposed to support?

Standard benchmarks almost never model time, branches, or deployment states. They treat the repository as a fixed snapshot.
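One way to turn those questions into a check is to compare the commits of retrieved artifacts against known deployment manifests. The sketch below assumes such manifests exist and pin one commit per repository; the release names, commit hashes, and file paths are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    repo: str
    commit: str
    path: str


# Hypothetical manifests: deployment name -> pinned commit per repository.
MANIFESTS = {
    "release-1": {"backend": "a1f3", "frontend": "9c2e", "schema": "77b0"},
    "release-2": {"backend": "b2e4", "frontend": "d4d4", "schema": "80aa"},
}


def coherent_deployments(artifacts):
    """Names of deployments in which every retrieved artifact's commit is pinned."""
    return [
        name
        for name, pins in MANIFESTS.items()
        if all(pins.get(a.repo) == a.commit for a in artifacts)
    ]


consistent = [
    Artifact("backend", "a1f3", "auth/login.py"),
    Artifact("frontend", "9c2e", "src/session.ts"),
    Artifact("schema", "77b0", "migrations/0042_users.sql"),
]
mixed = [
    Artifact("backend", "a1f3", "auth/login.py"),
    Artifact("frontend", "d4d4", "src/session.ts"),  # from a different release
]

print(coherent_deployments(consistent))  # ['release-1']
print(coherent_deployments(mixed))       # []
```

An empty result flags a retrieved context that no single deployment could have produced, which is the incoherent case described above.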

Why You Can’t Use Off-the-Shelf Benchmarks as Primary Evaluation

Given all of this, standard benchmarks play at most a supporting role:

They are good sanity checks:

- Has your GraphRAG pipeline or prompt orchestration accidentally broken basic code generation?

- Is the underlying model still competent at implementing straightforward functions or small patches?

They are not meaningful primary metrics:

- Passing HumanEval says nothing about whether your system can:

  - Find the right files across 25 repositories

  - Follow a multi-hop dependency chain

  - Maintain version consistency

  - Ground its answer in retrieved context

Relying on HumanEval, MBPP, or SWE-Bench scores to measure a GraphRAG system is like using a single unit test to validate a distributed system: it might fail if things are badly broken, but it won’t tell you whether the real system behaves correctly.

What Your Evaluation Must Measure

To evaluate a cross-repository GraphRAG system, you need metrics that align with its actual responsibilities.

At a minimum:

- Cross-repository retrieval precision: Did we find the right files across multiple repos?

- Relationship traversal accuracy: Did we follow the correct dependency paths?

- Context coherence: Is the retrieved context internally consistent?

- Generation grounding: Does the output reference retrieved context, not hallucinated knowledge?
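Several of these metrics reduce to set comparisons against hand-labeled ground truth. The sketch below shows one possible formulation; the function names, the `(repo, path)` file identifiers, and the choice to score grounding by cited paths are assumptions, not an established standard.

```python
def retrieval_precision_recall(retrieved, gold):
    """Precision and recall over (repo, path) pairs."""
    retrieved, gold = set(retrieved), set(gold)
    hits = retrieved & gold
    precision = len(hits) / len(retrieved) if retrieved else 0.0
    recall = len(hits) / len(gold) if gold else 0.0
    return precision, recall


def traversal_accuracy(walked_edges, gold_edges):
    """Fraction of the labeled dependency edges that the system followed."""
    walked_edges, gold_edges = set(walked_edges), set(gold_edges)
    return len(walked_edges & gold_edges) / len(gold_edges) if gold_edges else 0.0


def grounding_rate(cited, retrieved):
    """Fraction of files cited in the answer that were actually retrieved.

    An answer that cites nothing gets no grounding credit in this sketch.
    """
    cited, retrieved = set(cited), set(retrieved)
    return len(cited & retrieved) / len(cited) if cited else 0.0


# Hypothetical labels for the query "explain the user authentication flow".
gold = {("user-service", "auth/login.py"),
        ("web-frontend", "src/session.ts"),
        ("compliance", "policies/auth.yaml")}
retrieved = {("user-service", "auth/login.py"),
             ("web-frontend", "src/session.ts"),
             ("billing-service", "invoice.py")}
cited = {("user-service", "auth/login.py"),
         ("user-service", "auth/tokens.py")}  # second file was never retrieved

precision, recall = retrieval_precision_recall(retrieved, gold)
print(round(precision, 2), round(recall, 2))  # 0.67 0.67
print(grounding_rate(cited, retrieved))       # 0.5
```

Context coherence does not fit this set-overlap shape; it needs a consistency check across the retrieved artifacts rather than a comparison to a gold set.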

Beyond these, consider additional evaluative dimensions:

- Time- and version-coherence metrics: Are artifacts from compatible commits/branches?

- End-to-end task success: Does the system actually accomplish cross-repo tasks (e.g., explain a flow, patch multiple files across repos) with a correct, consistent narrative?

- Robustness to partial retrieval: How gracefully does the system perform when some relevant files are missing or lagging behind in a branch?

Structure your evaluation plan around these axes, and treat standard single-repo benchmarks as ancillary sanity checks rather than primary determinants of success.

Key References

- CodeRAG-Bench findings on retrieval-generation gaps

- Microsoft GraphRAG architecture overview

- GraphRAG retrieval pipeline design

These references provide the grounding for understanding why cross-repository context changes everything and how a GraphRAG system is architected to navigate it.