Designing a Three-Layer Evaluation Framework for Cross-Repository GraphRAG

Standard evaluation approaches treat retrieval-augmented generation as a monolithic system: query goes in, answer comes out, measure quality. This works poorly for GraphRAG systems operating across multiple repositories, where failures can occur at distinct stages with very different root causes.

A retrieval failure looks different from a reasoning failure, which looks different from a generation failure. Conflating them makes debugging nearly impossible and optimization directionless.

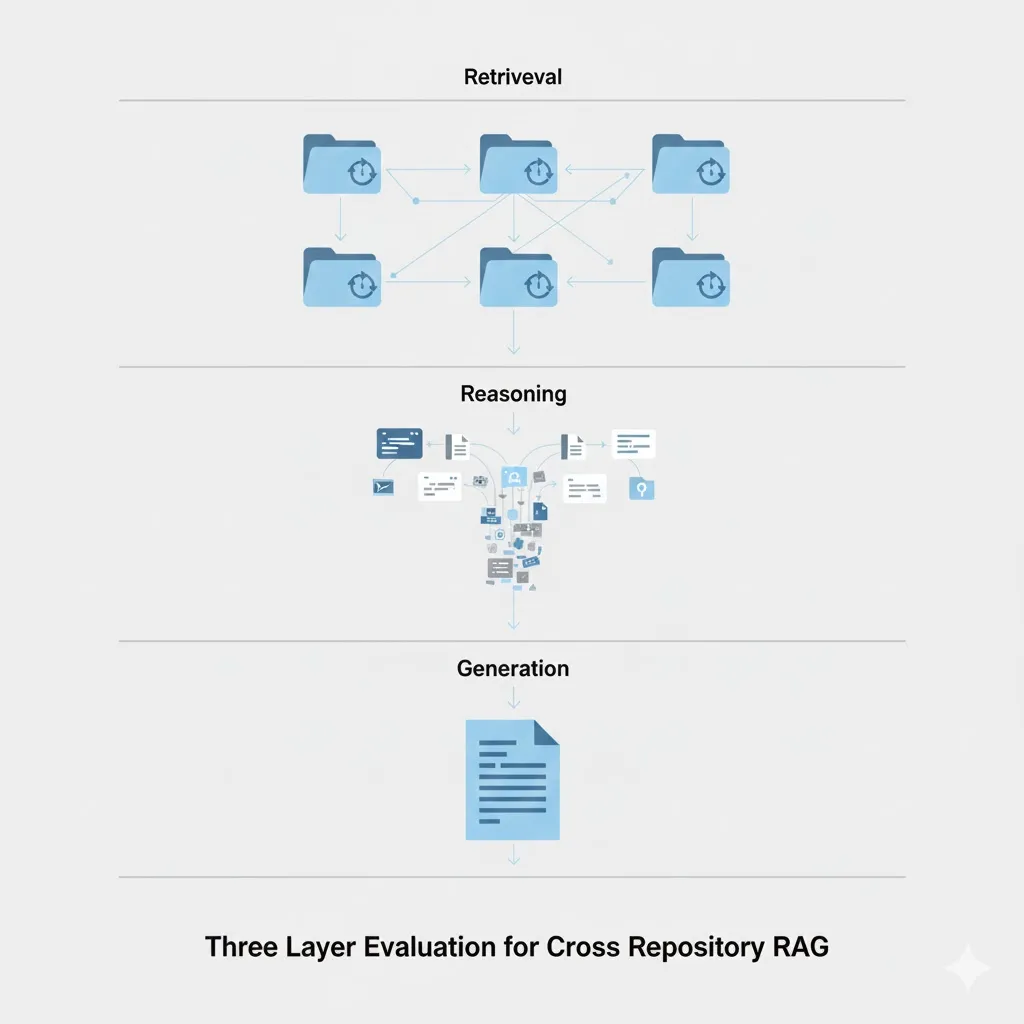

This post introduces a three-layer evaluation architecture that separates these concerns:

- Retrieval Layer: Can the system find the right files and chunks across repositories?

- Reasoning Layer: Can the system correctly combine information from multiple sources?

- Generation Layer: Is the final output correct and grounded in retrieved context?

Each layer has distinct metrics, target thresholds, and evaluation methodologies. Together, they provide the diagnostic granularity you need to systematically improve a cross-repository GraphRAG system.

Why Three Layers?

Consider a failure case: the system produces an incorrect explanation of your authentication flow. Without layer separation, you face a debugging maze:

- Did retrieval miss the relevant auth service files?

- Did retrieval find them, but reasoning failed to connect frontend and backend components?

- Did reasoning succeed, but generation hallucinated implementation details?

Each root cause requires a different intervention:

| Failure Layer | Symptom | Intervention |
|---|---|---|
| Retrieval | Relevant files never reached the context | Improve embeddings, graph construction, or query expansion |
| Reasoning | Files retrieved but relationships misunderstood | Improve context ordering, add explicit relationship prompts |
| Generation | Context correct but output fabricated details | Adjust generation prompts, add grounding constraints |

A three-layer framework surfaces which layer failed, enabling targeted improvement rather than blind experimentation.

Layer 1: Retrieval Evaluation

What You’re Measuring

Can the GraphRAG system find the correct files and chunks across 25 repositories given a natural language query?

This is the foundation. If retrieval fails, no amount of reasoning or generation sophistication will save you. The system cannot synthesize information it never retrieved.

Core Metrics

| Metric | Definition | Target for Production |
|---|---|---|
| NDCG@k | Normalized discounted cumulative gain at k documents | > 0.75 for k=10 |
| Recall@k | Fraction of relevant documents in top k | > 0.85 for k=20 |
| MRR | Mean reciprocal rank of first relevant document | > 0.6 |
| Cross-Repo Precision | Fraction of retrieved repos that contain relevant docs | > 0.7 |
| Relationship Traversal Accuracy | Did we follow the correct graph edges? | > 0.8 |

NDCG@k captures ranking quality: are the most relevant documents at the top? For cross-repository queries, this matters because context windows are limited—you need the best files first.

Recall@k measures coverage: did we find all the relevant files within the top k? A system with high precision but low recall will miss critical context.

MRR focuses on the first relevant result. For many queries, finding one highly relevant file quickly is more important than perfect ranking of the full list.

Cross-Repo Precision is specific to multi-repository settings. If a query requires files from auth-service and user-frontend, but retrieval returns files from billing-service and analytics, you have a repository-level targeting problem distinct from file-level ranking.
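The pipeline later in this post calls `calculate_ndcg`, `calculate_recall`, and `calculate_mrr` without defining them. As a minimal sketch of the three ranking metrics, assuming binary relevance (a file is either in the gold set or not) and file-level deduplication of chunk results, something like the following would do. The function names and the example paths are illustrative, not the post's own implementation.

```python
import math
from typing import List, Sequence, Set


def dedupe(ranked: Sequence[str]) -> List[str]:
    """Keep the first occurrence of each document, preserving rank order."""
    return list(dict.fromkeys(ranked))


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of relevant documents that appear in the top k results."""
    if not relevant:
        return 1.0  # Assumed convention: nothing to find counts as full recall
    return len(set(dedupe(ranked)[:k]) & relevant) / len(relevant)


def reciprocal_rank(ranked: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    for rank, doc in enumerate(dedupe(ranked), start=1):
        if doc in relevant:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Binary-relevance NDCG: DCG of the ranking divided by the ideal DCG."""
    top_k = dedupe(ranked)[:k]
    dcg = sum(
        1.0 / math.log2(rank + 1)
        for rank, doc in enumerate(top_k, start=1)
        if doc in relevant
    )
    ideal_hits = min(len(relevant), k)
    idcg = sum(1.0 / math.log2(rank + 1) for rank in range(1, ideal_hits + 1))
    return dcg / idcg if idcg > 0 else 0.0


# Hypothetical ranking: two chunks came from the same auth file
ranked = [
    "auth-service/api/auth.py",
    "billing-service/invoice.py",
    "auth-service/api/auth.py",
    "user-frontend/LoginForm.tsx",
]
relevant = {
    "auth-service/api/auth.py",
    "user-frontend/LoginForm.tsx",
    "auth-service/models.py",
}

recall = recall_at_k(ranked, relevant, k=20)
rr = reciprocal_rank(ranked, relevant)
ndcg = ndcg_at_k(ranked, relevant, k=10)
```

MRR is the mean of `reciprocal_rank` over all evaluation queries. Graded relevance (for example, "essential" vs. "helpful" files) would replace the `1.0` gain with a per-document gain.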

GraphRAG-Specific: Relationship Traversal Accuracy

Standard retrieval metrics assume a flat document collection. Your GraphRAG system uses a knowledge graph, so you need metrics that evaluate graph traversal quality.

Consider a query: “How does user authentication flow from the frontend to the database?”

The correct retrieval path might traverse:

```text
[frontend/auth/LoginForm.tsx]
  --calls--> [backend/api/auth.py]
  --imports--> [backend/services/user_service.py]
  --queries--> [database/schemas/users.sql]
```

Did your system follow this path, or did it jump to unrelated nodes?

```python
from typing import List, Set, Tuple

Edge = Tuple[str, str]


def path_edges(path: List[str]) -> Set[Edge]:
    """Return the (source, target) pairs along a path of node identifiers."""
    return {(path[i], path[i + 1]) for i in range(len(path) - 1)}


def graph_traversal_precision(
    retrieved_paths: List[List[str]],  # Paths through the graph
    gold_paths: List[List[str]],       # Annotated correct paths
) -> float:
    """
    Measure whether the system traversed correct relationships.

    Example gold path:
    [user_service/models.py] --imports--> [shared/types.py]
    --defines--> [User type] --referenced_by--> [frontend/api.ts]

    Edges are pooled into sets before comparing, so an edge that appears in
    several retrieved paths is counted once and the score stays in [0, 1].
    """
    gold_edges: Set[Edge] = set()
    for gold_path in gold_paths:
        gold_edges |= path_edges(gold_path)

    retrieved_edges: Set[Edge] = set()
    for retrieved_path in retrieved_paths:
        retrieved_edges |= path_edges(retrieved_path)

    if not gold_edges:
        return 0.0
    return len(gold_edges & retrieved_edges) / len(gold_edges)


def count_matching_edges(
    gold_path: List[str],
    retrieved_path: List[str],
) -> int:
    """
    Count how many edges in the gold path appear in the retrieved path.

    An edge is a (source, target) pair representing a graph relationship.
    """
    return len(path_edges(gold_path) & path_edges(retrieved_path))
```

This metric captures something NDCG cannot: whether the system understands the relationships between code artifacts, not just their individual relevance.
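To see what the edge comparison reports in practice, here is a small worked example on the authentication path from the query above. The retrieved path, including the `backend/utils/logging.py` hop, is invented for illustration; the sketch only assumes that paths are ordered lists of node identifiers.

```python
from typing import List, Set, Tuple


def edges(path: List[str]) -> Set[Tuple[str, str]]:
    """Consecutive (source, target) pairs along a path."""
    return set(zip(path, path[1:]))


gold_path = [
    "frontend/auth/LoginForm.tsx",
    "backend/api/auth.py",
    "backend/services/user_service.py",
    "database/schemas/users.sql",
]

# Hypothetical system output: the first hop is right, then it wanders off
retrieved_path = [
    "frontend/auth/LoginForm.tsx",
    "backend/api/auth.py",
    "backend/utils/logging.py",
]

gold_edges = edges(gold_path)
matched = gold_edges & edges(retrieved_path)
missed = sorted(gold_edges - matched)

score = len(matched) / len(gold_edges)  # 1 of 3 gold edges was followed

for source, target in missed:
    print(f"missed edge: {source} -> {target}")
```

Listing the missed edges alongside the score is often more useful than the score alone, because it points at the specific relationship (here, the import of the user service) that graph construction or traversal failed to follow.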

Cross-Repository Retrieval Precision

For multi-repository systems, you also need repository-level metrics:

```python
from typing import Dict, List, Set


def cross_repo_precision(
    retrieved_docs: List[Dict[str, str]],  # Each doc has 'repo' and 'path'
    relevant_repos: Set[str],              # Ground truth relevant repositories
) -> float:
    """
    What fraction of repositories we retrieved from actually contain relevant docs?

    High cross-repo precision means we're targeting the right repositories.
    Low precision means we're pulling from irrelevant codebases.
    """
    retrieved_repos = {doc['repo'] for doc in retrieved_docs}
    if not retrieved_repos:
        return 0.0

    correct_repos = retrieved_repos.intersection(relevant_repos)
    return len(correct_repos) / len(retrieved_repos)


def cross_repo_recall(
    retrieved_docs: List[Dict[str, str]],
    relevant_repos: Set[str],
) -> float:
    """
    What fraction of relevant repositories did we retrieve from?

    High cross-repo recall means we're not missing entire codebases.
    """
    retrieved_repos = {doc['repo'] for doc in retrieved_docs}
    if not relevant_repos:
        return 1.0  # No relevant repos means trivially complete

    covered_repos = retrieved_repos.intersection(relevant_repos)
    return len(covered_repos) / len(relevant_repos)
```

A system might have excellent file-level NDCG within repositories it searches, but if it never searches the right repositories, overall quality collapses.
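As a quick illustration of how repository-level precision and recall diverge, the sketch below reuses the earlier scenario in which a query needs `auth-service` and `user-frontend` but retrieval also pulls from `billing-service` and `analytics`. The file paths are made up for the example.

```python
from typing import Dict, List, Set

# Hypothetical retrieval result for an authentication query
retrieved_docs: List[Dict[str, str]] = [
    {"repo": "auth-service", "path": "src/login.py"},
    {"repo": "billing-service", "path": "src/invoice.py"},
    {"repo": "analytics", "path": "src/events.py"},
]
relevant_repos: Set[str] = {"auth-service", "user-frontend"}

retrieved_repos = {doc["repo"] for doc in retrieved_docs}
hits = retrieved_repos & relevant_repos

precision = len(hits) / len(retrieved_repos)  # 1 of 3 retrieved repos is relevant
recall = len(hits) / len(relevant_repos)      # 1 of 2 relevant repos was reached

missed_repos = sorted(relevant_repos - retrieved_repos)  # never searched
noise_repos = sorted(retrieved_repos - relevant_repos)   # searched needlessly
```

Low precision and low recall call for different fixes: noise repositories suggest tightening repository routing, while missed repositories suggest the graph lacks the cross-repo edges needed to reach them.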

Layer 2: Reasoning Evaluation

What You’re Measuring

Given retrieved context from multiple repositories, can the system combine information correctly?

Retrieval might succeed—all the right files are in context—but the system still fails to reason across them. This layer evaluates the synthesis capability.

Core Metrics

| Metric | Definition | Target |
|---|---|---|
| Context Coherence Score | Are retrieved chunks from consistent versions/branches? | > 0.9 |
| Information Integration | Does the response synthesize multiple sources? | > 0.7 |
| Conflict Resolution | When sources disagree, is the resolution correct? | > 0.6 |
| Completeness | Are all relevant aspects of the query addressed? | > 0.75 |

Context Coherence Score

Cross-repository systems face a unique challenge: retrieved files might come from incompatible versions. The backend might be from main, the frontend from a feature branch, and the schema from an outdated commit.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class RetrievedChunk:
    repo: str
    path: str
    commit_sha: str
    branch: str
    timestamp: datetime
    content: str


def context_coherence_score(
    chunks: List[RetrievedChunk],
    compatibility_matrix: dict,  # Defines which versions work together
) -> float:
    """
    Measure whether retrieved chunks come from mutually compatible versions.

    A coherent context has all chunks from versions that were
    actually deployed or tested together.
    """
    if len(chunks) <= 1:
        return 1.0

    compatible_pairs = 0
    total_pairs = 0

    # Compare every unordered pair of chunks exactly once
    for i, chunk_a in enumerate(chunks):
        for chunk_b in chunks[i + 1:]:
            total_pairs += 1
            if are_versions_compatible(chunk_a, chunk_b, compatibility_matrix):
                compatible_pairs += 1

    return compatible_pairs / total_pairs if total_pairs > 0 else 1.0


def are_versions_compatible(
    chunk_a: RetrievedChunk,
    chunk_b: RetrievedChunk,
    compatibility_matrix: dict,
) -> bool:
    """
    Check if two chunks come from compatible versions.

    Compatibility can be defined by:
    - Same branch
    - Commits within a time window
    - Explicit compatibility mapping from CI/CD
    """
    # Same repo, same branch is always compatible
    if chunk_a.repo == chunk_b.repo and chunk_a.branch == chunk_b.branch:
        return True

    # Check explicit compatibility matrix. Try both orderings, because the
    # caller cannot know which chunk of the pair was recorded first.
    key = (chunk_a.repo, chunk_a.commit_sha, chunk_b.repo, chunk_b.commit_sha)
    reverse_key = (chunk_b.repo, chunk_b.commit_sha, chunk_a.repo, chunk_a.commit_sha)
    for candidate in (key, reverse_key):
        if candidate in compatibility_matrix:
            return compatibility_matrix[candidate]

    # Fallback: commits within 24 hours on main branches are likely compatible
    if chunk_a.branch == 'main' and chunk_b.branch == 'main':
        time_diff = abs((chunk_a.timestamp - chunk_b.timestamp).total_seconds())
        return time_diff < 86400  # 24 hours

    return False
```

Information Integration Assessment

Does the response actually use multiple sources, or does it rely on a single file and ignore the rest?

```python
import re
from typing import List, Set


def information_integration_score(
    response: str,
    retrieved_chunks: List[RetrievedChunk],  # Defined in the coherence code above
    min_sources_expected: int = 3,
) -> float:
    """
    Measure whether the response integrates information from multiple sources.

    A well-integrated response should reference or use content from
    multiple retrieved chunks, not just one.
    """
    if min_sources_expected <= 0:
        return 1.0  # Nothing was expected, so nothing can be missing

    sources_used: Set[str] = set()

    for chunk in retrieved_chunks:
        # Check if response contains identifiers, function names,
        # or unique strings from this chunk
        chunk_identifiers = extract_identifiers(chunk.content)
        for identifier in chunk_identifiers:
            if len(identifier) > 4 and identifier in response:  # Avoid short matches
                sources_used.add(f"{chunk.repo}/{chunk.path}")
                break

    # Score based on how many sources we expected vs. used
    return min(len(sources_used) / min_sources_expected, 1.0)


def extract_identifiers(code: str) -> List[str]:
    """Extract function, class, constant, and table names from code."""
    # Match common identifier patterns
    patterns = [
        r'def (\w+)\s*\(',        # Python functions
        r'class (\w+)',           # Classes
        r'function (\w+)\s*\(',   # JavaScript functions
        r'const (\w+)\s*=',       # JavaScript constants
        r'CREATE TABLE (\w+)',    # SQL tables
    ]

    identifiers: List[str] = []
    for pattern in patterns:
        identifiers.extend(re.findall(pattern, code))

    return identifiers
```

Aggregation Challenge Evaluation

The most rigorous test of reasoning is an “aggregation challenge”—a query that requires multiple sources to answer correctly.

Example Query: “Explain the data flow from user registration to first purchase”

Required sources:

- Frontend: registration form component

- Backend: user creation endpoint

- Database: user and order schemas

- Backend: purchase processing service

- Connector: payment gateway integration

Evaluation criteria:

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class IntegrationError(Enum):
    INVENTED_STEP = "invented_step"      # Made up intermediate components
    OMITTED_COMPONENT = "omitted"        # Missed a critical piece
    MISATTRIBUTION = "misattribution"    # Assigned functionality to wrong service
    INCORRECT_ORDER = "incorrect_order"  # Steps in wrong sequence


@dataclass
class AggregationEvaluation:
    query: str
    required_components: List[str]
    response: str
    components_addressed: List[str]
    errors: List[IntegrationError]

    @property
    def completeness_score(self) -> float:
        """Fraction of required components addressed."""
        if not self.required_components:
            return 1.0  # Nothing required, so nothing is missing
        return len(self.components_addressed) / len(self.required_components)

    @property
    def accuracy_score(self) -> float:
        """Penalize for integration errors."""
        error_penalty = len(self.errors) * 0.15
        return max(0.0, 1.0 - error_penalty)

    @property
    def overall_score(self) -> float:
        """Combined reasoning quality score."""
        return (self.completeness_score + self.accuracy_score) / 2


def evaluate_aggregation_challenge(
    response: str,
    required_components: List[str],
    component_descriptions: dict,  # What each component should do
    llm_judge,                     # LLM for evaluation
) -> AggregationEvaluation:
    """
    Use an LLM judge to evaluate whether the response correctly
    integrates information from all required components.

    format_components and parse_aggregation_evaluation are helpers
    that this post does not define.
    """
    evaluation_prompt = f"""
Evaluate whether this response correctly explains a data flow.

Required components that MUST be addressed:
{format_components(required_components, component_descriptions)}

Response to evaluate:
{response}

For each required component:
1. Is it mentioned or addressed? (yes/no)
2. Is it attributed to the correct service/file? (yes/no/na)
3. Is its role in the flow accurate? (yes/no/na)

Also identify any errors:
- INVENTED_STEP: The response describes components that don't exist
- OMITTED_COMPONENT: A required component is missing
- MISATTRIBUTION: Functionality is assigned to the wrong service
- INCORRECT_ORDER: The sequence of steps is wrong

Return structured evaluation.
"""

    # Call LLM judge and parse response
    evaluation = llm_judge.evaluate(evaluation_prompt)
    return parse_aggregation_evaluation(evaluation, required_components)
```

Layer 3: Generation Evaluation

What You’re Measuring

Is the final output correct and grounded in retrieved context?

Even with perfect retrieval and reasoning, generation can fail:

- The model might hallucinate details not in context

- Code might have syntax errors or logical bugs

- Explanations might be correct but poorly expressed

Core Metrics

| Metric | Definition | Target |
|---|---|---|
| Factual Correctness | Does the code/explanation work? | > 0.85 |
| Groundedness | Is every claim traceable to retrieved context? | > 0.9 |
| Hallucination Rate | Fraction of claims not supported by context | < 0.1 |
| Repository Attribution | Are sources correctly cited? | > 0.95 |

Execution-Based Evaluation for Code

For code generation tasks, the ultimate test is execution. Does the code actually work?

```python
import os
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from enum import Enum
from typing import Any, Dict, List, Optional


class ExecutionStatus(Enum):
    SUCCESS = "success"
    SYNTAX_ERROR = "syntax_error"
    RUNTIME_ERROR = "runtime_error"
    TEST_FAILURE = "test_failure"
    TIMEOUT = "timeout"


@dataclass
class TestCase:
    name: str
    inputs: Dict[str, Any]
    expected_output: Any
    timeout_seconds: float = 30.0


@dataclass
class ExecutionResult:
    status: ExecutionStatus
    tests_passed: int
    tests_total: int
    error_message: Optional[str] = None
    execution_time_ms: Optional[float] = None

    @property
    def pass_rate(self) -> float:
        return self.tests_passed / self.tests_total if self.tests_total > 0 else 0.0


def execute_generated_code(
    generated_code: str,
    test_cases: List[TestCase],
    repository_context: Dict[str, str],  # Mock imports from your repos
    language: str = "python",            # Only Python is handled below
) -> ExecutionResult:
    """
    Execute generated code with mocked cross-repository dependencies.

    This allows testing code that imports from multiple repositories
    without requiring the full repository setup.

    generate_test_harness and parse_test_output are helpers that this
    post does not define.
    """
    with tempfile.TemporaryDirectory() as tmpdir:
        # Set up mock modules for cross-repo imports
        setup_mock_imports(tmpdir, repository_context)

        # Write generated code
        code_path = os.path.join(tmpdir, "generated_code.py")
        with open(code_path, "w") as f:
            f.write(generated_code)

        # Write test harness
        test_path = os.path.join(tmpdir, "test_generated.py")
        test_code = generate_test_harness(test_cases)
        with open(test_path, "w") as f:
            f.write(test_code)

        # Execute tests
        try:
            result = subprocess.run(
                # Use the current interpreter rather than whatever "python" is on PATH
                [sys.executable, test_path],
                cwd=tmpdir,
                capture_output=True,
                text=True,
                # Budget for the whole run: the longest single-test timeout
                timeout=max(tc.timeout_seconds for tc in test_cases),
            )
            return parse_test_output(result, test_cases)
        except subprocess.TimeoutExpired:
            return ExecutionResult(
                status=ExecutionStatus.TIMEOUT,
                tests_passed=0,
                tests_total=len(test_cases),
                error_message="Execution timed out",
            )
        except Exception as e:
            return ExecutionResult(
                status=ExecutionStatus.RUNTIME_ERROR,
                tests_passed=0,
                tests_total=len(test_cases),
                error_message=str(e),
            )


def setup_mock_imports(tmpdir: str, repository_context: Dict[str, str]) -> None:
    """
    Create mock modules that simulate imports from other repositories.

    repository_context maps import paths to mock implementations:
    {
        "auth_service.models": "class User: ...",
        "shared.types": "UserID = str",
        "database.connection": "def get_db(): return MockDB()"
    }
    """
    for import_path, mock_code in repository_context.items():
        # Convert import path to directory structure
        parts = import_path.split(".")
        module_dir = os.path.join(tmpdir, *parts[:-1])
        os.makedirs(module_dir, exist_ok=True)

        # Create __init__.py files so each directory is importable as a package
        for i in range(len(parts) - 1):
            init_path = os.path.join(tmpdir, *parts[:i + 1], "__init__.py")
            if not os.path.exists(init_path):
                with open(init_path, "w") as f:
                    f.write("")

        # Write mock module
        module_path = os.path.join(module_dir, f"{parts[-1]}.py")
        with open(module_path, "w") as f:
            f.write(mock_code)
```

Groundedness Evaluation

For explanation tasks, execution isn’t possible. Instead, we measure whether every claim in the response is traceable to retrieved context.
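The rubric that follows asks the judge to report each claim as `Claim:`, `Support:`, and `Evidence:` fields, and the evaluation code later calls a `parse_groundedness_result` helper that the post does not define. A minimal sketch of that parsing step, assuming the judge follows the requested field order and never uses the literal text `Claim:` inside a claim or evidence quote, might look like this. The sample judge reply is invented for illustration.

```python
import re
from typing import List, Tuple

# One claim record: claim text, support label, evidence text.
# The lookahead stops the evidence at the next record, the summary line, or end of input.
CLAIM_RE = re.compile(
    r"Claim:\s*(?P<claim>.+?)\s*"
    r"Support:\s*(?P<support>DIRECT|INFERRED|UNSUPPORTED)\s*"
    r"Evidence:\s*(?P<evidence>.+?)"
    r"(?=\s*Claim:|\s*Final Score:|\Z)",
    re.DOTALL,
)


def parse_claims(judge_output: str) -> List[Tuple[str, str, str]]:
    """Extract (claim, support_level, evidence) triples from a judge reply."""
    return [
        (m["claim"].strip(), m["support"], m["evidence"].strip())
        for m in CLAIM_RE.finditer(judge_output)
    ]


def groundedness(claims: List[Tuple[str, str, str]]) -> float:
    """(DIRECT + INFERRED) / total claims; 1.0 when there are no claims."""
    if not claims:
        return 1.0
    supported = sum(1 for _, support, _ in claims if support != "UNSUPPORTED")
    return supported / len(claims)


# Hypothetical judge reply in the requested format
sample = (
    "Claim: LoginForm posts credentials to the auth endpoint\n"
    "Support: DIRECT\n"
    "Evidence: fetch('/api/auth', ...)\n"
    "Claim: Tokens expire after one hour\n"
    "Support: UNSUPPORTED\n"
    "Evidence: none\n"
    "Final Score: 1/2 claims supported = 50%\n"
    "Grounded: NO\n"
)

claims = parse_claims(sample)
score = groundedness(claims)
```

Recomputing the score from the parsed claims, rather than trusting the judge's own `Final Score` line, guards against arithmetic slips in the judge's reply. Asking the judge for JSON instead of free text would make the parsing step more robust.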

```python
GROUNDEDNESS_RUBRIC = """
Evaluate whether each factual claim in the response is supported by the provided context.

## Instructions

1. Extract all factual claims from the response
   - A claim is any statement that could be true or false
   - Ignore hedged language ("might", "could", "possibly")
   - Include technical details (function names, file paths, behaviors)

2. For each claim, find supporting evidence in the context
   - Search all provided context chunks
   - Note which chunk (if any) supports the claim

3. Rate each claim's support level:
   - DIRECT: Exact or near-exact match in context
   - INFERRED: Logically derivable from context (e.g., "A calls B" + "B returns X" → "A gets X")
   - UNSUPPORTED: No basis in provided context

4. Calculate groundedness score:
   - Score = (DIRECT + INFERRED) / Total Claims
   - A response is grounded if score > 0.9

## Output Format

For each claim:
Claim: [extracted claim]
Support: [DIRECT/INFERRED/UNSUPPORTED]
Evidence: [quote from context or "none"]

Final Score: [X/Y claims supported] = [percentage]
Grounded: [YES/NO]
"""
```

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class SupportLevel(Enum):
    DIRECT = "direct"
    INFERRED = "inferred"
    UNSUPPORTED = "unsupported"


@dataclass
class ClaimEvaluation:
    claim: str
    support_level: SupportLevel
    evidence: Optional[str]
    source_chunk: Optional[str]


@dataclass
class GroundednessResult:
    claims: List[ClaimEvaluation]

    @property
    def total_claims(self) -> int:
        return len(self.claims)

    @property
    def supported_claims(self) -> int:
        return sum(
            1 for c in self.claims
            if c.support_level in (SupportLevel.DIRECT, SupportLevel.INFERRED)
        )

    @property
    def groundedness_score(self) -> float:
        if self.total_claims == 0:
            return 1.0
        return self.supported_claims / self.total_claims

    @property
    def hallucination_rate(self) -> float:
        return 1.0 - self.groundedness_score

    @property
    def is_grounded(self) -> bool:
        # Strictly greater than 0.9, matching the rubric and the target table
        return self.groundedness_score > 0.9


def evaluate_groundedness(
    response: str,
    context_chunks: List[RetrievedChunk],
    llm_judge,
) -> GroundednessResult:
    """
    Use an LLM judge to evaluate response groundedness.

    parse_groundedness_result is a helper that this post does not define.
    """
    context_formatted = "\n\n---\n\n".join([
        f"Source: {chunk.repo}/{chunk.path}\n{chunk.content}"
        for chunk in context_chunks
    ])

    prompt = f"""
{GROUNDEDNESS_RUBRIC}

## Context

{context_formatted}

## Response to Evaluate

{response}

## Evaluation
"""

    evaluation = llm_judge.evaluate(prompt)
    return parse_groundedness_result(evaluation)
```

Repository Attribution Accuracy

When the system cites sources, are the citations correct?
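The attribution check in this section relies on an `extract_citations` helper that is not defined in the post. One way to sketch it, assuming citations are written as `[repo/path]` at the end of the sentence they support and that the repository name is everything before the first slash, is shown below. The naive sentence splitter and the example response are illustrative only.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Citation:
    repo: str
    path: str
    claim: str  # The sentence the citation is attached to


def extract_citations(
    response: str,
    citation_pattern: str = r"\[([^\]]+)\]",
) -> List[Citation]:
    """Pair each bracketed repo/path citation with its surrounding sentence."""
    citations: List[Citation] = []
    # Naive sentence split: break after ., !, or ? when followed by whitespace
    for sentence in re.split(r"(?<=[.!?])\s+", response):
        for target in re.findall(citation_pattern, sentence):
            repo, sep, path = target.partition("/")
            if not sep:
                continue  # Bracketed text that is not of the form repo/path
            # The claim is the sentence with citation markers removed
            claim = re.sub(citation_pattern, "", sentence)
            claim = re.sub(r"\s+([.!?,])", r"\1", claim).strip()
            citations.append(Citation(repo=repo, path=path, claim=claim))
    return citations


# Hypothetical system response
example = (
    "Login posts to the auth endpoint [backend/api/auth.py]. "
    "Users are stored in a dedicated table [database/schemas/users.sql]."
)
found = extract_citations(example)
```

A citation placed after the sentence's closing period would be attached to the wrong sentence by this splitter, and Markdown links also match the default pattern, so a production version would need a stricter citation syntax.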

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Citation:
    repo: str
    path: str
    claim: str  # What the citation supposedly supports


@dataclass
class AttributionResult:
    total_citations: int
    correct_citations: int
    incorrect_citations: List[Tuple[Citation, str]]  # (citation, error reason)

    @property
    def attribution_accuracy(self) -> float:
        if self.total_citations == 0:
            return 1.0
        return self.correct_citations / self.total_citations


def evaluate_attribution(
    response: str,
    context_chunks: List[RetrievedChunk],
    citation_pattern: str = r'\[([^\]]+)\]',  # e.g., [repo/path/file.py]
) -> AttributionResult:
    """
    Verify that citations in the response point to correct sources.

    extract_citations and verify_claim_in_chunk are helpers that this
    post does not define.
    """
    # Extract citations from response
    citations = extract_citations(response, citation_pattern)

    # Build index of available context
    context_index: Dict[str, RetrievedChunk] = {}
    for chunk in context_chunks:
        key = f"{chunk.repo}/{chunk.path}"
        context_index[key] = chunk

    correct = 0
    incorrect: List[Tuple[Citation, str]] = []

    for citation in citations:
        citation_key = f"{citation.repo}/{citation.path}"

        if citation_key not in context_index:
            incorrect.append((citation, "Source not in retrieved context"))
            continue

        chunk = context_index[citation_key]
        if not verify_claim_in_chunk(citation.claim, chunk.content):
            incorrect.append((citation, "Claim not supported by cited source"))
            continue

        correct += 1

    return AttributionResult(
        total_citations=len(citations),
        correct_citations=correct,
        incorrect_citations=incorrect,
    )
```

Putting It All Together: The Evaluation Pipeline

Here’s how the three layers combine into a complete evaluation pipeline:

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class LayeredEvaluationResult:
    # Layer 1: Retrieval
    ndcg_at_10: float
    recall_at_20: float
    mrr: float
    cross_repo_precision: float
    graph_traversal_precision: float

    # Layer 2: Reasoning
    context_coherence: float
    information_integration: float
    completeness: float

    # Layer 3: Generation
    factual_correctness: float  # From execution or manual eval
    groundedness: float
    hallucination_rate: float
    attribution_accuracy: float

    @property
    def retrieval_score(self) -> float:
        """Weighted average of retrieval metrics."""
        return (
            0.3 * self.ndcg_at_10 +
            0.25 * self.recall_at_20 +
            0.15 * self.mrr +
            0.15 * self.cross_repo_precision +
            0.15 * self.graph_traversal_precision
        )

    @property
    def reasoning_score(self) -> float:
        """Weighted average of reasoning metrics."""
        return (
            0.4 * self.context_coherence +
            0.35 * self.information_integration +
            0.25 * self.completeness
        )

    @property
    def generation_score(self) -> float:
        """Weighted average of generation metrics."""
        return (
            0.35 * self.factual_correctness +
            0.35 * self.groundedness +
            0.15 * (1 - self.hallucination_rate) +
            0.15 * self.attribution_accuracy
        )

    @property
    def overall_score(self) -> float:
        """
        Overall system quality.

        Note: These are multiplicative because a failure at any layer
        cascades to subsequent layers.
        """
        return (
            self.retrieval_score *
            self.reasoning_score *
            self.generation_score
        )

    def diagnose_failures(self) -> List[str]:
        """Identify which layers need improvement."""
        issues = []

        if self.retrieval_score < 0.7:
            issues.append("RETRIEVAL: Low retrieval quality - check embeddings and graph construction")
            if self.cross_repo_precision < 0.7:
                issues.append("  - Cross-repo targeting is weak")
            if self.graph_traversal_precision < 0.8:
                issues.append("  - Graph edge traversal is missing relationships")

        if self.reasoning_score < 0.7:
            issues.append("REASONING: Poor information synthesis")
            if self.context_coherence < 0.9:
                issues.append("  - Version coherence issues in retrieved context")
            if self.information_integration < 0.7:
                issues.append("  - Not using multiple sources effectively")

        if self.generation_score < 0.8:
            issues.append("GENERATION: Output quality issues")
            if self.hallucination_rate > 0.1:
                issues.append("  - High hallucination rate - add grounding constraints")
            if self.attribution_accuracy < 0.95:
                issues.append("  - Citation accuracy needs improvement")

        return issues


def run_layered_evaluation(
    query: str,
    system_response: str,
    retrieved_chunks: List[RetrievedChunk],
    gold_documents: List[str],
    gold_paths: List[List[str]],
    required_components: List[str],
    test_cases: Optional[List[TestCase]],
    llm_judge,
) -> LayeredEvaluationResult:
    """
    Run complete three-layer evaluation on a single query.

    calculate_ndcg, calculate_recall, calculate_mrr, extract_paths_from_chunks,
    and extract_code_from_response are helpers that this post does not define.
    """
    # Layer 1: Retrieval
    retrieved_docs = [f"{c.repo}/{c.path}" for c in retrieved_chunks]

    ndcg = calculate_ndcg(retrieved_docs, gold_documents, k=10)
    recall = calculate_recall(retrieved_docs, gold_documents, k=20)
    mrr = calculate_mrr(retrieved_docs, gold_documents)

    retrieved_paths = extract_paths_from_chunks(retrieved_chunks)
    graph_precision = graph_traversal_precision(retrieved_paths, gold_paths)

    # Gold documents are "repo/path" strings, so the repo is the first segment
    relevant_repos = set(doc.split('/')[0] for doc in gold_documents)
    cross_repo_prec = cross_repo_precision(
        [{'repo': c.repo, 'path': c.path} for c in retrieved_chunks],
        relevant_repos,
    )

    # Layer 2: Reasoning
    # An empty compatibility matrix means only the branch and time-window rules apply
    coherence = context_coherence_score(retrieved_chunks, {})
    integration = information_integration_score(
        system_response,
        retrieved_chunks,
        # At least one source is expected; this also avoids dividing by zero
        min_sources_expected=max(1, len(required_components)),
    )
    aggregation_eval = evaluate_aggregation_challenge(
        system_response,
        required_components,
        {},  # component descriptions
        llm_judge,
    )

    # Layer 3: Generation
    groundedness_result = evaluate_groundedness(
        system_response,
        retrieved_chunks,
        llm_judge,
    )
    attribution_result = evaluate_attribution(
        system_response,
        retrieved_chunks,
    )

    # Execution-based correctness if test cases provided
    if test_cases:
        exec_result = execute_generated_code(
            extract_code_from_response(system_response),
            test_cases,
            {},  # repository context mocks
        )
        factual_correctness = exec_result.pass_rate
    else:
        factual_correctness = aggregation_eval.accuracy_score

    return LayeredEvaluationResult(
        ndcg_at_10=ndcg,
        recall_at_20=recall,
        mrr=mrr,
        cross_repo_precision=cross_repo_prec,
        graph_traversal_precision=graph_precision,
        context_coherence=coherence,
        information_integration=integration,
        completeness=aggregation_eval.completeness_score,
        factual_correctness=factual_correctness,
        groundedness=groundedness_result.groundedness_score,
        hallucination_rate=groundedness_result.hallucination_rate,
        attribution_accuracy=attribution_result.attribution_accuracy,
    )
```

Target Thresholds Summary

| Layer | Metric | Target | Critical Threshold |
|---|---|---|---|
| Retrieval | NDCG@10 | > 0.75 | < 0.5 = severe |
| Retrieval | Recall@20 | > 0.85 | < 0.6 = severe |
| Retrieval | MRR | > 0.6 | < 0.3 = severe |
| Retrieval | Cross-Repo Precision | > 0.7 | < 0.5 = severe |
| Retrieval | Graph Traversal Accuracy | > 0.8 | < 0.5 = severe |
| Reasoning | Context Coherence | > 0.9 | < 0.7 = severe |
| Reasoning | Information Integration | > 0.7 | < 0.5 = severe |
| Reasoning | Completeness | > 0.75 | < 0.5 = severe |
| Generation | Factual Correctness | > 0.85 | < 0.7 = severe |
| Generation | Groundedness | > 0.9 | < 0.8 = severe |
| Generation | Hallucination Rate | < 0.1 | > 0.2 = severe |
| Generation | Attribution Accuracy | > 0.95 | < 0.85 = severe |
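The table above translates directly into a lookup that a monitoring job could apply to each metric. The sketch below copies the target and critical values from the table; the `"ok"` / `"warning"` / `"severe"` labels, and treating the band between the two thresholds as a warning, are assumptions added for illustration.

```python
from typing import Dict, Tuple

# metric -> (target, critical, higher_is_better), values taken from the table
THRESHOLDS: Dict[str, Tuple[float, float, bool]] = {
    "ndcg_at_10": (0.75, 0.5, True),
    "recall_at_20": (0.85, 0.6, True),
    "mrr": (0.6, 0.3, True),
    "cross_repo_precision": (0.7, 0.5, True),
    "graph_traversal_precision": (0.8, 0.5, True),
    "context_coherence": (0.9, 0.7, True),
    "information_integration": (0.7, 0.5, True),
    "completeness": (0.75, 0.5, True),
    "factual_correctness": (0.85, 0.7, True),
    "groundedness": (0.9, 0.8, True),
    "hallucination_rate": (0.1, 0.2, False),
    "attribution_accuracy": (0.95, 0.85, True),
}


def classify(metric: str, value: float) -> str:
    """Return 'ok', 'warning', or 'severe' for a single metric value."""
    target, critical, higher_is_better = THRESHOLDS[metric]
    if not higher_is_better:
        # Flip signs so the comparisons below work for lower-is-better metrics
        value, target, critical = -value, -target, -critical
    if value < critical:
        return "severe"
    if value > target:
        return "ok"
    return "warning"  # Between critical and target (hypothetical middle band)


def alerts(metrics: Dict[str, float]) -> Dict[str, str]:
    """Keep only the metrics that missed their target."""
    statuses = {name: classify(name, value) for name, value in metrics.items()}
    return {name: status for name, status in statuses.items() if status != "ok"}


# Hypothetical evaluation run
report = alerts({
    "ndcg_at_10": 0.8,
    "recall_at_20": 0.55,
    "hallucination_rate": 0.15,
})
```

Keeping the thresholds in one data structure means the dashboard, the alerting job, and `diagnose_failures` can all read the same numbers instead of drifting apart.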

Key Takeaways

Layer separation enables diagnosis. When quality drops, you can immediately identify whether retrieval, reasoning, or generation is the bottleneck.

GraphRAG needs graph-aware metrics. Standard IR metrics miss relationship traversal quality. Add metrics that evaluate edge-following behavior.

Cross-repository adds unique dimensions. Repository-level precision, version coherence, and multi-source integration don’t exist in single-repo settings.

Multiplicative degradation is real. Poor retrieval doesn’t just hurt retrieval scores—it cascades through reasoning and generation. The overall score reflects this.

Thresholds should trigger alerts. Set up monitoring with critical thresholds. When any layer drops below critical, investigate immediately.
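The multiplicative takeaway is easy to check with arithmetic. The sketch below compares the product of layer scores, which is how `overall_score` combines them, against a plain average. The layer scores are made-up numbers chosen only to show the effect.

```python
import math
from typing import Dict


def product_score(layers: Dict[str, float]) -> float:
    """Multiplicative combination, as used by overall_score."""
    return math.prod(layers.values())


def mean_score(layers: Dict[str, float]) -> float:
    """Arithmetic mean, shown only for comparison."""
    return sum(layers.values()) / len(layers)


# Three layers that each look acceptable on their own
balanced = {"retrieval": 0.8, "reasoning": 0.8, "generation": 0.8}

# Strong reasoning and generation sitting on top of weak retrieval
bottlenecked = {"retrieval": 0.4, "reasoning": 0.9, "generation": 0.9}

balanced_product = product_score(balanced)          # 0.8 ** 3
bottlenecked_product = product_score(bottlenecked)  # 0.4 * 0.9 * 0.9
bottlenecked_mean = mean_score(bottlenecked)

# The layer with the lowest score is the first place to look
weakest_layer = min(bottlenecked, key=bottlenecked.get)
```

Three layers at 0.8 each already multiply down to about 0.51, and the bottlenecked system scores about 0.32 multiplicatively even though its average is about 0.73. An averaged score would hide the retrieval problem that the product exposes.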

Next Steps

This framework gives you the metrics. The next post will cover how to build the evaluation dataset: constructing gold annotations for cross-repository queries at scale.
