Building Your Evaluation Dataset from Organizational Repositories

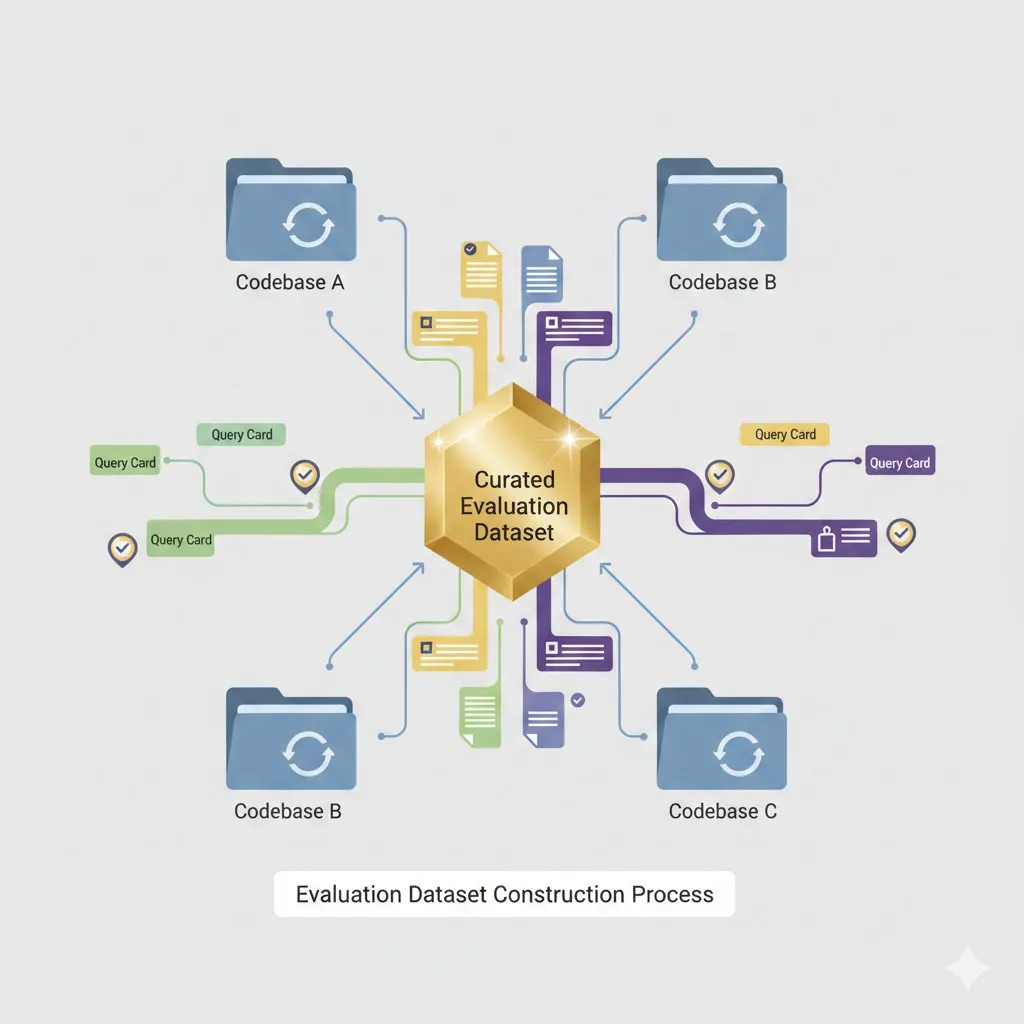

Getting RAG evaluation right starts with having the right dataset. Not a theoretical one. Not something scraped from public benchmarks. One built from your actual codebase, your real developer questions, and your specific cross-repository patterns.

This is where most teams skip steps and pay for it later. They grab some generic dataset, run evaluations, get numbers that look reasonable, and then wonder why their system fails on production queries. The disconnect happens because public benchmarks don’t reflect how your organization’s code actually connects.

Let me walk through how to build an evaluation dataset that actually tells you whether your retrieval system works.

What Makes a Good Evaluation Dataset

Your dataset needs four things to be useful.

Coverage across query types. Remember the query taxonomy from earlier—single-repo multi-file, cross-repo single concept, dependency chains, temporal queries? Your evaluation set needs examples of all of them. Heavy coverage of easy queries tells you nothing about where your system breaks.

Difficulty distribution that matches reality. Some queries are straightforward. Find a function, return its implementation. Others require tracing through five repositories to understand how a payment flows from frontend to database. Your dataset should reflect this spread.

Human-verified gold retrievals. This is non-negotiable. Someone who knows the codebase needs to confirm that yes, these are the files required to answer this question. LLM-generated “gold standards” introduce noise exactly where you need precision.

Version pinning. Code changes. If your evaluation queries reference files that have been refactored since annotation, your measurements become meaningless. Pin everything to specific commits.

How Many Queries Do You Actually Need

Here’s a rough breakdown that provides statistical reliability without requiring months of annotation work:

| Query Type | Training/Calibration | Evaluation | Total |
|---|---|---|---|
| Single-Repo Multi-File | 50 | 100 | 150 |
| Cross-Repo Single Concept | 30 | 75 | 105 |
| Cross-Repo Dependency Chain | 20 | 50 | 70 |
| Cross-Repo Temporal | 10 | 25 | 35 |
| Total | 110 | 250 | 360 |

Start with 250 evaluation queries minimum. Studies like CodeRAG-Bench use 100-500 queries per task type to get reliable signal. Below that threshold, random variation dominates your metrics.

The distribution skews toward easier queries intentionally. You need enough hard queries to measure edge cases, but not so many that annotation becomes prohibitively expensive.
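
To make the effect of sample size concrete, here is a rough sketch of how the uncertainty on a measured success rate shrinks as the number of queries grows. It uses the normal-approximation 95% confidence interval for a proportion. The 0.70 success rate is an illustrative assumption, not a measured result, and `ci_half_width` is a name made up for this example.

```python
import math


def ci_half_width(success_rate: float, n_queries: int, z: float = 1.96) -> float:
    """Half-width of the normal-approximation 95% CI for a proportion."""
    return z * math.sqrt(success_rate * (1.0 - success_rate) / n_queries)


# Illustrative assumption: the system succeeds on 70% of queries.
assumed_rate = 0.70
for n in (25, 50, 100, 250):
    half_width = ci_half_width(assumed_rate, n)
    print(f"n={n:>3}: {assumed_rate:.2f} +/- {half_width:.3f}")
```

Under these assumptions, a 25-query slice carries roughly ±0.18 of uncertainty, while the full 250-query evaluation set is closer to ±0.06. Per-type numbers for the smaller slices are best read as directional rather than precise.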

Mining Real Questions from Your Development History

The best evaluation queries come from questions developers actually asked. They’re naturally phrased, they reflect real information needs, and the answers already exist somewhere in your documentation or discussions.

Pull Request Comments

PR comments are gold. When someone asks “why does this call the payment service here?” during review, they’re articulating exactly the kind of question your RAG system should answer.

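```python
import re
from datetime import datetime
from typing import List

# Phrasings that usually signal a request for context or explanation.
QUESTION_PATTERNS = [
    r"why (did|do|does|is|are|was|were)",
    r"how (does|do|did|is|are)",
    r"what (is|are|does|do|happens)",
    r"where (is|are|does|do)",
    r"can you explain",
    r"I don't understand",
]
QUESTION_RE = re.compile("|".join(QUESTION_PATTERNS), re.IGNORECASE)


def extract_pr_questions(repo: str, since: datetime) -> List[Query]:
    """
    Find comments that asked for context or explanation.
    """
    queries = []
    # fetch_review_comments() stands in for your code host's API client; each
    # comment is assumed to expose .body (text) and .files (paths under review).
    for comment in fetch_review_comments(repo, since):
        # Extract matching comments
        if QUESTION_RE.search(comment.body):
            # Pair with the files being reviewed (potential gold retrieval set)
            queries.append(Query(text=comment.body, gold_files=comment.files,
                                 query_type="unclassified"))
    return queries
```

The files being reviewed give you a starting point for gold retrievals. The follow-up discussion often reveals what additional context was needed.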

Code Review Discussions

These are especially valuable for cross-repository queries. A question like “Why does this call the payment service here?” probably touches:

- The file under review

- The payment service implementation

- Shared contracts or types between them

When the reviewer eventually says “ah, got it”—that’s your gold answer. The path from confusion to understanding maps directly to what your retrieval system needs to surface.

Onboarding Documentation Gaps

New developer questions reveal systematic knowledge gaps. These aren’t edge cases. They’re the questions every new team member asks because the answer spans multiple repositories and isn’t documented anywhere obvious.

Common patterns:

- “How do I add a new API endpoint?” (touches backend, frontend, possibly database migrations)

- “Where is authentication handled?” (security service, backend middleware, frontend state)

- “What happens when a user signs up?” (frontend, backend, email service, database)

These make excellent evaluation queries because they’re high-value (asked repeatedly) and inherently cross-repository.

Generating Queries Programmatically

Mining historical questions won’t give you complete coverage. You need to generate queries that exercise specific patterns in your codebase.

Dependency Chain Queries

High-connectivity files create natural query opportunities. If a file has many dependents, asking “what uses this?” produces queries with clear gold retrievals.

```python
from typing import List

import networkx as nx


def generate_dependency_queries(dependency_graph: nx.DiGraph) -> List[Query]:
    """
    Generate queries about cross-repo dependencies.

    Assumes nodes are pathlib.Path objects and that an edge A -> B means
    "B depends on A", so a node's successors are its dependents.
    """
    queries = []
    for source_file in dependency_graph.nodes():
        dependents = list(dependency_graph.successors(source_file))
        if len(dependents) > 3:  # High-connectivity node
            query = f"What services/components use {source_file.name}?"
            gold_retrieval = [source_file] + dependents
            queries.append(Query(
                text=query,
                gold_files=gold_retrieval,
                query_type="dependency_chain"
            ))
    return queries
```

The dependency graph itself defines the gold retrieval set. No ambiguity about which files are relevant.

Schema Impact Queries

Database schema changes ripple through codebases in predictable ways. Every table has code that reads from it, writes to it, or defines models around it.

```python
from pathlib import Path
from typing import List


def generate_schema_impact_queries(sql_files: List[Path]) -> List[Query]:
    """
    Generate queries about database schema dependencies.
    """
    queries = []
    for schema_file in sql_files:
        # extract_table_definitions() and find_table_references() are
        # codebase-specific helpers you supply.
        tables = extract_table_definitions(schema_file)
        for table in tables:
            # Find all code that references this table
            referencing_files = find_table_references(table.name)
            query = f"What code would be affected if I add a column to {table.name}?"
            queries.append(Query(
                text=query,
                gold_files=[schema_file] + referencing_files,
                query_type="impact_analysis"
            ))
    return queries
```

These queries test whether your retrieval system understands data flow: not just code structure, but how information moves through your system.

API Contract Queries

APIs create explicit boundaries between services. For each endpoint, there’s an implementation and consumers. Linking them tests cross-repository understanding.

```python
from pathlib import Path
from typing import List


def generate_api_queries(openapi_specs: List[Path]) -> List[Query]:
    """
    Generate queries about API implementations and consumers.
    """
    queries = []
    for spec in openapi_specs:
        endpoints = parse_openapi(spec)
        for endpoint in endpoints:
            # Find backend implementation
            implementation = find_endpoint_implementation(endpoint)
            # Find frontend consumers
            consumers = find_api_consumers(endpoint)
            query = f"How is {endpoint.method} {endpoint.path} implemented and used?"
            queries.append(Query(
                text=query,
                gold_files=[implementation] + consumers,
                query_type="cross_repo_concept"
            ))
    return queries
```

The OpenAPI spec acts as a bridge: it tells you what should exist on both sides, making gold retrieval identification straightforward.

The Human Annotation Process

Programmatic generation gets you coverage. Human annotation gets you quality. For complex queries, there’s no substitute for engineers who know the codebase.

Week 1-2: Query Creation

Each annotator writes 20 queries based on their recent work. These should be questions that required them to look at multiple repositories. Document the mental model needed to answer—this becomes validation material later.

Week 2-3: Gold Retrieval Annotation

For each query, the annotator lists every file needed to answer it. Mark files as ESSENTIAL (the answer cannot be constructed without this) or HELPFUL (improves the answer but isn’t strictly required). Record dependency paths between files.

This distinction matters. ESSENTIAL files define your hard recall requirement. HELPFUL files tell you whether your ranking puts the most relevant results first.
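
As a rough sketch of how the two labels could feed separate metrics, the functions below compute recall over ESSENTIAL files and precision over all gold files within the top-k results. The function names, the `repo:path` identifier format, and the sample data are assumptions made for illustration.

```python
from typing import List, Set


def essential_recall_at_k(retrieved: List[str], essential: Set[str], k: int) -> float:
    """Fraction of ESSENTIAL files that appear in the top-k retrieved results."""
    if not essential:
        return 1.0  # nothing required, so nothing can be missed
    return len(essential & set(retrieved[:k])) / len(essential)


def gold_precision_at_k(retrieved: List[str], essential: Set[str],
                        helpful: Set[str], k: int) -> float:
    """Fraction of the top-k results that are ESSENTIAL or HELPFUL."""
    top_k = retrieved[:k]
    if not top_k:
        return 0.0
    gold = essential | helpful
    return sum(1 for path in top_k if path in gold) / len(top_k)


# Hypothetical file identifiers in "repo:path" form.
essential = {"payment-service:src/handlers/payment.py", "shared-types:src/errors.py"}
helpful = {"docs:architecture/payment-flow.md"}
retrieved = [
    "payment-service:src/handlers/payment.py",
    "docs:architecture/payment-flow.md",
    "frontend:src/utils/format.ts",
    "shared-types:src/errors.py",
]

print(essential_recall_at_k(retrieved, essential, k=3))
print(gold_precision_at_k(retrieved, essential, helpful, k=3))
```

In this made-up case, only one of the two ESSENTIAL files lands in the top three, so essential recall at 3 is 0.5 even though two of the three results are gold files. Widening to k=4 recovers the missing file.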

Week 3-4: Gold Answer Generation

Annotators write the ideal response to each query. Responses must cite specific files and line numbers. Include the reasoning chain—how does the information from file A connect to file B to produce the answer?

This reasoning chain becomes your evaluation rubric for answer quality, not just retrieval accuracy.

Week 4-5: Cross-Validation

A second annotator attempts to answer each query using only the gold retrieval set. If they can’t construct the answer, the gold set is incomplete. If they construct a different answer, there’s ambiguity that needs resolution.

Target Cohen’s κ above 0.7 for inter-annotator agreement. Below that, your gold standard has too much noise to trust.
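
One way to compute that agreement figure, assuming both annotators assign a label to the same list of candidate files, is sketched below. The IRRELEVANT label and the sample annotations are assumptions added for illustration; only ESSENTIAL and HELPFUL come from the process described above.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty item list")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected by chance, from each annotator's label frequencies.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels for the same eight candidate files.
annotator_1 = ["ESSENTIAL", "ESSENTIAL", "HELPFUL", "IRRELEVANT",
               "ESSENTIAL", "HELPFUL", "IRRELEVANT", "IRRELEVANT"]
annotator_2 = ["ESSENTIAL", "ESSENTIAL", "HELPFUL", "IRRELEVANT",
               "HELPFUL", "HELPFUL", "IRRELEVANT", "ESSENTIAL"]

kappa = cohens_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.2f}")
```

The two annotators agree on six of eight files (75% raw agreement), yet kappa comes out near 0.63 once chance agreement is subtracted. Raw agreement alone would overstate how reliable this gold set is.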

The Annotation Schema

Structure matters. Here’s what a complete annotation record looks like:

```json
{
  "query_id": "q_001",
  "query_text": "How does the system handle payment failures?",
  "query_type": "cross_repo_concept",
  "gold_retrieval": {
    "essential": [
      {"repo": "payment-service", "path": "src/handlers/payment.py", "lines": "45-89"},
      {"repo": "shared-types", "path": "src/errors.py", "lines": "12-34"},
      {"repo": "frontend", "path": "src/components/PaymentForm.tsx", "lines": "78-120"}
    ],
    "helpful": [
      {"repo": "docs", "path": "architecture/payment-flow.md"}
    ]
  },
  "dependency_path": [
    "frontend/PaymentForm.tsx --calls--> payment-service/payment.py",
    "payment-service/payment.py --raises--> shared-types/errors.py",
    "frontend/PaymentForm.tsx --handles--> shared-types/errors.py"
  ],
  "gold_answer": "Payment failures are handled through a three-tier system...",
  "annotator": "senior_eng_1",
  "validator": "senior_eng_2",
  "agreement_score": 0.85
}
```

The dependency path field is particularly valuable. It documents the conceptual links between files, which is exactly what your retrieval system needs to learn.
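
A minimal sketch of validation rules for records in this shape follows. The specific rules (required fields, at least one essential file, a validator distinct from the annotator) are assumptions chosen for illustration; adjust them to whatever your annotation guidelines actually require.

```python
from typing import Any, Dict, List

REQUIRED_FIELDS = (
    "query_id", "query_text", "query_type",
    "gold_retrieval", "gold_answer", "annotator", "validator",
)


def validate_record(record: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the record passes."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in record]

    gold = record.get("gold_retrieval", {})
    essential = gold.get("essential", [])
    if not essential:
        problems.append("gold_retrieval.essential must list at least one file")

    # Every gold file needs enough information to locate it.
    for entry in essential + gold.get("helpful", []):
        if "repo" not in entry or "path" not in entry:
            problems.append(f"file entry needs repo and path: {entry}")

    # Cross-validation only means something if a second person did it.
    annotator = record.get("annotator")
    if annotator is not None and annotator == record.get("validator"):
        problems.append("validator must differ from annotator")

    return problems


# A deliberately flawed record to show what the checks report.
record = {
    "query_id": "q_001",
    "query_text": "How does the system handle payment failures?",
    "query_type": "cross_repo_concept",
    "gold_retrieval": {
        "essential": [{"repo": "payment-service", "path": "src/handlers/payment.py"}],
        "helpful": [{"repo": "docs"}],  # missing "path"
    },
    "annotator": "senior_eng_1",
    "validator": "senior_eng_1",  # same person as the annotator
}
problems = validate_record(record)
for problem in problems:
    print(problem)
```

Returning a list of problems rather than raising on the first one lets an annotator fix everything in a single pass.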

Using LLMs to Scale Annotation

Human annotation doesn’t scale. For 360 queries with full annotation, you’re looking at significant engineering time. LLMs can help expand your dataset, but only with guardrails.

```python
QUERY_AUGMENTATION_PROMPT = """
Given this code file and its cross-repository dependencies, generate 5 natural questions
a developer might ask that would require understanding all these files to answer.

File: {main_file_content}

Dependencies:
{dependency_files_content}

Requirements:
1. Questions should require information from multiple files
2. Questions should reflect realistic developer needs
3. Vary question types: how/why/what/where/impact
4. Include at least one question about error handling
5. Include at least one question about data flow

Generate questions in JSON format with reasoning about why each requires cross-repo context.
"""
```

The key constraint: require the LLM to explain why each generated query needs the provided files. This reasoning can be spot-checked. If the reasoning is wrong, the query gets thrown out.

Sample 10% of synthetic queries for human verification. If more than 20% fail verification, your prompt needs refinement.
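
The sampling rule above can be sketched in a few lines. The function names are invented for this example, and the fixed random seed is an assumption added so that the review sample is reproducible.

```python
import random
from typing import List, Sequence


def sample_for_review(queries: Sequence[str], fraction: float = 0.10,
                      seed: int = 0) -> List[str]:
    """Draw a reproducible random sample of about `fraction` of the queries."""
    if not queries:
        return []
    sample_size = max(1, round(len(queries) * fraction))
    return random.Random(seed).sample(list(queries), sample_size)


def prompt_needs_refinement(review_passed: Sequence[bool],
                            max_failure_rate: float = 0.20) -> bool:
    """True when the share of failed reviews exceeds the allowed failure rate."""
    if not review_passed:
        raise ValueError("No review results to evaluate")
    failures = sum(1 for passed in review_passed if not passed)
    return failures / len(review_passed) > max_failure_rate


synthetic_queries = [f"synthetic query {i}" for i in range(200)]
to_review = sample_for_review(synthetic_queries)
print(len(to_review))  # 20 queries go to a human reviewer

# Hypothetical review outcome: 5 of the 20 sampled queries fail verification.
review_passed = [False] * 5 + [True] * 15
print(prompt_needs_refinement(review_passed))
```

With 5 failures out of 20, the failure rate is 25%, which exceeds the 20% limit, so the prompt would be sent back for revision.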

Common Pitfalls

Over-indexing on easy queries. Single-file lookups are easy to generate and annotate. Resist the temptation to fill your dataset with them. They won’t tell you anything interesting.

Ambiguous gold retrievals. Some queries have multiple valid file sets that could answer them. Document these alternatives rather than forcing a single “correct” answer.

Stale annotations. Code changes faster than annotation. Build update triggers—when annotated files change significantly, flag the query for re-validation.

Synthetic query drift. LLM-generated queries can drift toward patterns that are easy to generate but don’t reflect real developer needs. Keep them grounded with human review.
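
For the stale-annotation pitfall, a simple update trigger could look like the sketch below. It assumes annotation records list their gold files as `repo` and `path` pairs, and that you already have the set of paths changed since the pinned commit (for example, gathered per repository with `git diff --name-only`). It flags on any change; deciding what counts as a significant change is left to you.

```python
from typing import Any, Dict, Iterable, List, Set


def gold_paths(record: Dict[str, Any]) -> Set[str]:
    """Collect "repo/path" identifiers for every gold file in a record."""
    gold = record.get("gold_retrieval", {})
    entries = gold.get("essential", []) + gold.get("helpful", [])
    return {f"{entry['repo']}/{entry['path']}" for entry in entries}


def flag_stale_queries(records: Iterable[Dict[str, Any]],
                       changed_paths: Set[str]) -> List[str]:
    """Return IDs of queries whose gold files overlap the changed paths."""
    return [r["query_id"] for r in records if gold_paths(r) & changed_paths]


# Hypothetical records and change set.
records = [
    {"query_id": "q_001", "gold_retrieval": {
        "essential": [{"repo": "payment-service", "path": "src/handlers/payment.py"}],
        "helpful": [{"repo": "docs", "path": "architecture/payment-flow.md"}]}},
    {"query_id": "q_002", "gold_retrieval": {
        "essential": [{"repo": "frontend", "path": "src/components/PaymentForm.tsx"}]}},
]
changed = {"payment-service/src/handlers/payment.py"}

stale = flag_stale_queries(records, changed)
print(stale)
```

Only `q_001` is flagged here, because it is the only query whose gold files include the changed path.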

What You Should Have After This

A complete annotation guidelines document that any engineer can follow. Query generation scripts that exercise your specific codebase patterns. An annotation schema with validation rules. And most importantly, a target dataset composition that covers the query types your system will actually face.

This dataset becomes your ground truth. Every retrieval improvement, every chunking strategy change, every embedding model swap—you measure against this. Without it, you’re optimizing blind.