The Categories Anthropic Didn't Try to Eat

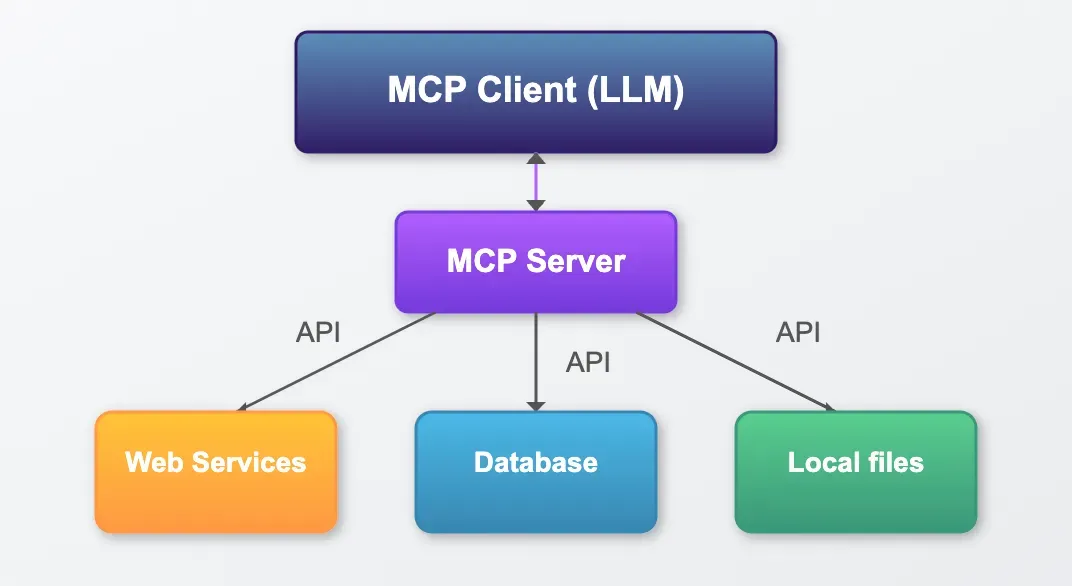

Anthropic shipped Claude Code, the MCP standard, and a frontier model, and then deliberately stopped. This post maps the five adjacent categories Anthropic chose not to build, why it made that choice, and what it means for anyone building infrastructure underneath its agents.